Validation & Metrics

Evaluating and measuring model performance systematically.

Learning Outcomes

By completing this topic, you will:

- Implement cross-validation strategies

- Choose appropriate metrics for your problem

- Avoid common evaluation mistakes

- Design validation for production systems

Prerequisites

- Supervised Learning concepts

- Classification and Regression basics

- Understanding of overfitting

Key Concepts

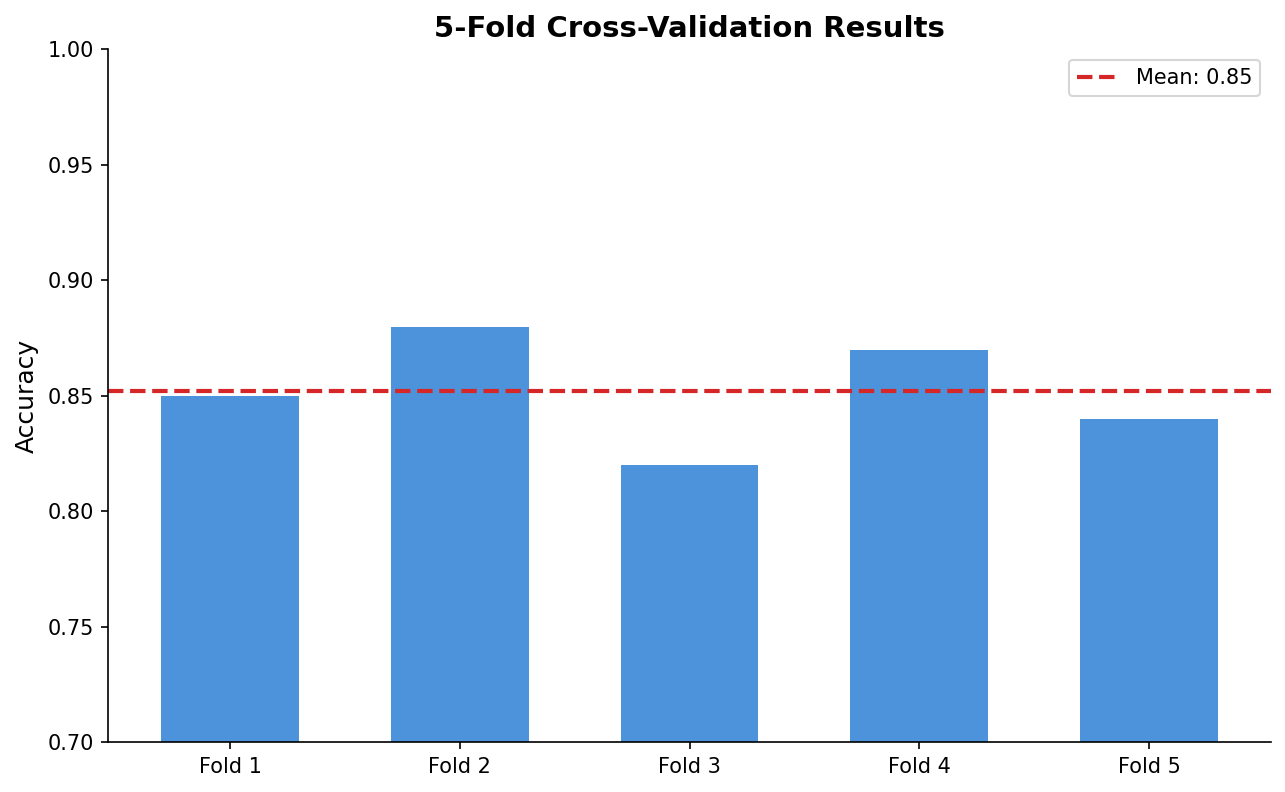

Cross-Validation

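Cross-validation evaluates a model on several held-out folds, giving a more robust performance estimate than a single train/test split: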

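- K-fold: Split the data into K folds, train on K-1 of them and test on the remaining one, and rotate until every fold has served once as the test set

- Stratified: Preserve the class distribution of the full dataset in every fold

- Time series: Respect temporal ordering, so the model is only trained on observations that precede the test period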
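The three splitting schemes above can be sketched in plain Python. This is a minimal, dependency-free illustration rather than a reference implementation, and the function names `kfold_indices`, `stratified_kfold_indices` and `time_series_splits` are hypothetical. In practice, scikit-learn's `KFold`, `StratifiedKFold` and `TimeSeriesSplit` (in `sklearn.model_selection`) provide tested versions of the same ideas, although their exact fold assignment may differ from this sketch.

```python
import random
from collections import defaultdict

# Hypothetical helpers for illustration; not a library API.


def kfold_indices(n, k, shuffle=True, seed=0):
    """Yield (train_idx, test_idx) pairs; each of the k folds is the test set exactly once."""
    idx = list(range(n))
    if shuffle:
        random.Random(seed).shuffle(idx)
    # Spread the remainder so fold sizes differ by at most one.
    sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        test = idx[start:start + size]
        train = idx[:start] + idx[start + size:]
        yield train, test
        start += size


def stratified_kfold_indices(labels, k, seed=0):
    """Yield folds whose class proportions approximately match the full label list."""
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    offset = 0
    for y in sorted(by_class):
        members = by_class[y]
        rng.shuffle(members)
        # Deal this class's indices round-robin, continuing where the previous class stopped.
        for j, i in enumerate(members):
            folds[(offset + j) % k].append(i)
        offset += len(members)
    everything = set(range(len(labels)))
    for test in folds:
        yield sorted(everything - set(test)), sorted(test)


def time_series_splits(n, n_splits):
    """Expanding window: train only on earlier points, then test on the block that follows."""
    test_size = n // (n_splits + 1)
    for s in range(1, n_splits + 1):
        train_end = s * test_size
        yield list(range(train_end)), list(range(train_end, train_end + test_size))


if __name__ == "__main__":
    labels = [0] * 8 + [1] * 4
    for train, test in stratified_kfold_indices(labels, k=4):
        print("test fold:", test, "labels:", [labels[i] for i in test])
```

With 8 negatives and 4 positives split into 4 folds, the round-robin dealing gives every test fold 2 negatives and 1 positive, matching the overall 2:1 ratio. The time-series helper uses an expanding training window, so no split ever trains on data from after its test block.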

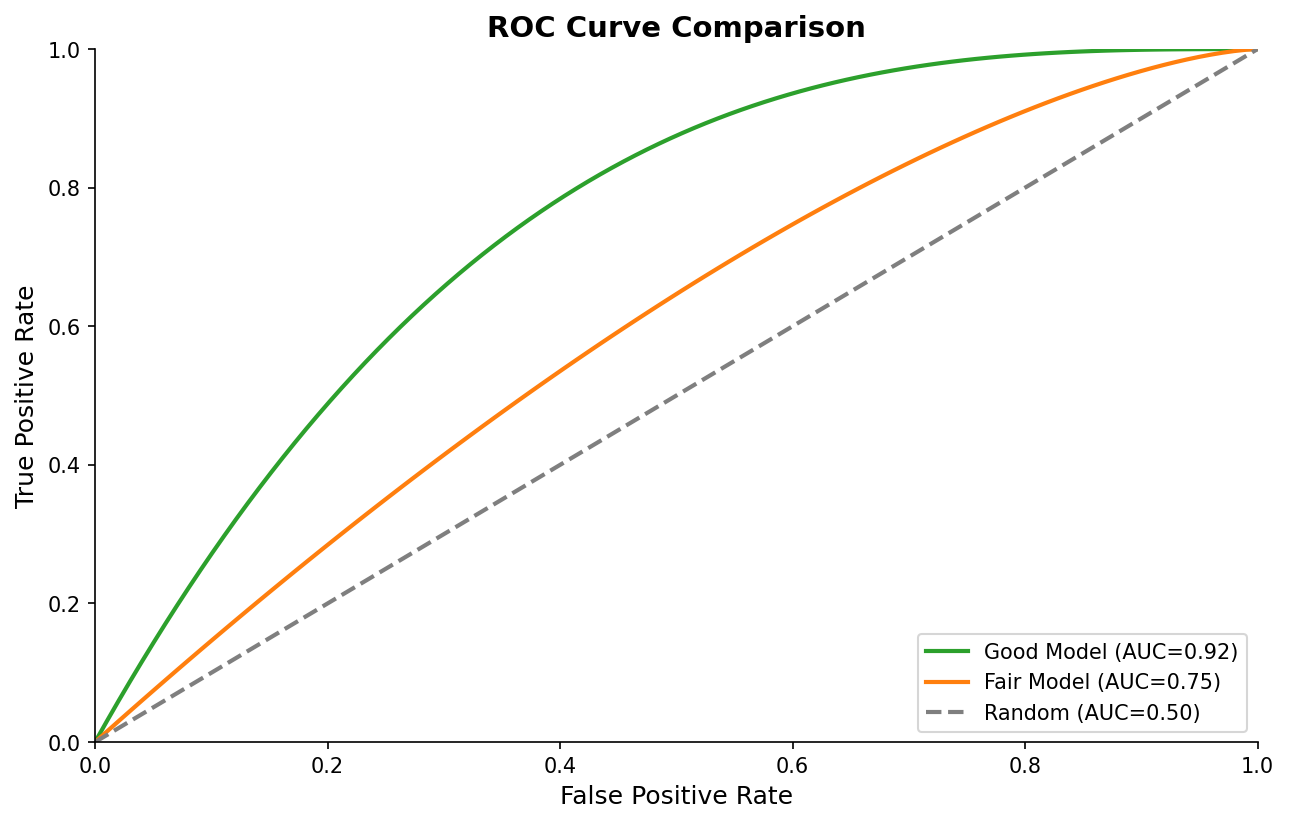

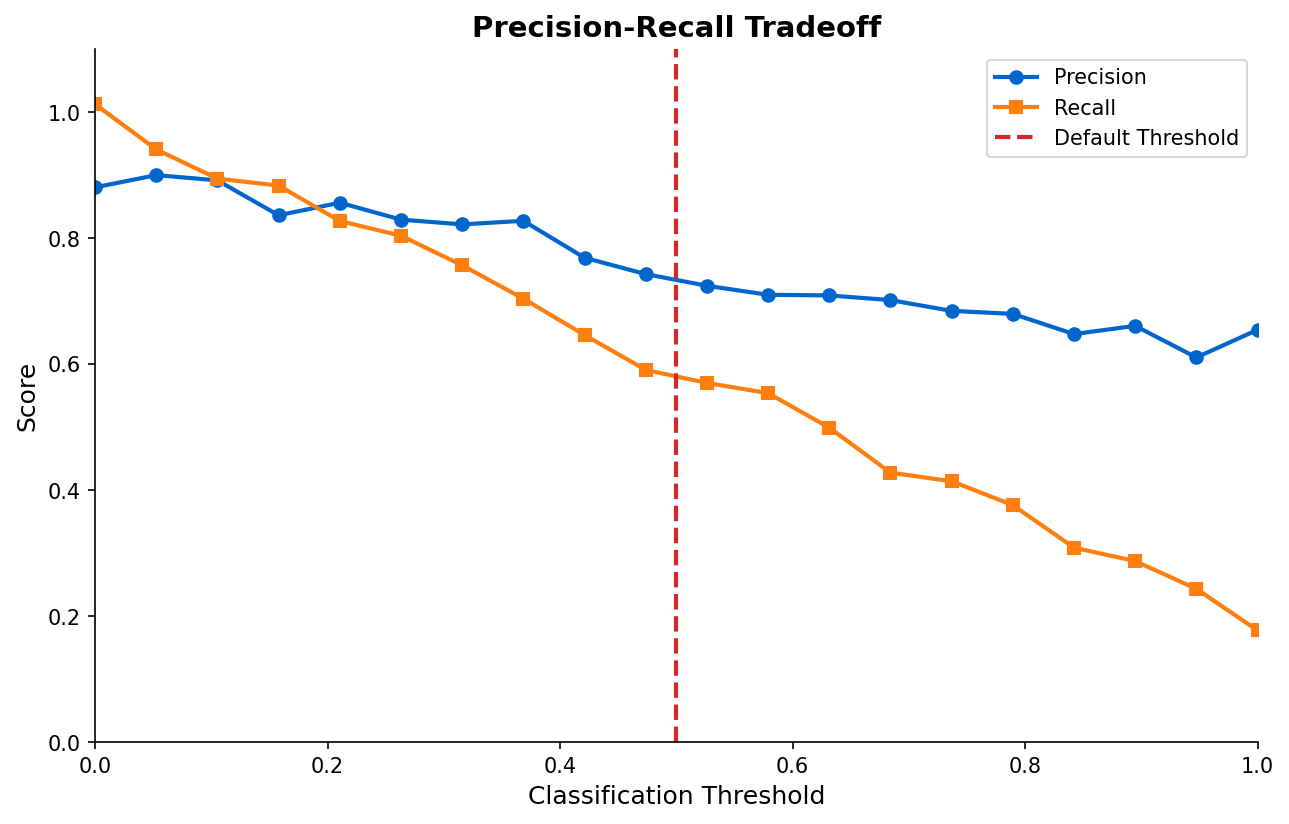

Classification Metrics

| Metric | Best suited when |
|---|---|
| Accuracy | Classes are roughly balanced |
| Precision | False positives are costly |
| Recall | False negatives are costly |
| F1-score | You need a balance between precision and recall |
| ROC-AUC | You want a threshold-independent measure of how well the model separates the classes |
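To make the table concrete, here is a sketch of each metric computed from scratch for binary labels (1 = positive). It assumes hard 0/1 predictions for the threshold-based metrics and real-valued scores for ROC-AUC, and it reports 0.0 whenever a ratio is undefined; that zero-division convention is an assumption of this sketch. ROC-AUC is computed via its pairwise interpretation (the probability that a random positive is scored above a random negative), which equals the area under the ROC curve. For real use, `sklearn.metrics` provides `accuracy_score`, `precision_score`, `recall_score`, `f1_score` and `roc_auc_score`.

```python
# Minimal from-scratch metrics for binary labels (1 = positive class).


def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn) for hard 0/1 predictions."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def classification_metrics(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / len(y_true)
    # Assumed convention: an undefined ratio (zero denominator) is reported as 0.0.
    precision = tp / (tp + fp) if tp + fp else 0.0  # share of predicted positives that are real
    recall = tp / (tp + fn) if tp + fn else 0.0     # share of real positives that were found
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def roc_auc(y_true, scores):
    """Probability that a random positive outscores a random negative (ties count as 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))  # O(P*N) pairwise count; fine for small examples


if __name__ == "__main__":
    y_true = [1, 0, 1, 1, 0, 0]
    y_pred = [1, 0, 0, 1, 1, 0]
    scores = [0.9, 0.1, 0.4, 0.8, 0.6, 0.3]
    print(classification_metrics(y_true, y_pred))
    print(roc_auc(y_true, scores))
```

On this toy example, accuracy, precision, recall and F1 all equal 2/3, while ROC-AUC is 8/9 because 8 of the 9 positive/negative pairs are ranked in the right order.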

Regression Metrics

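- MSE/RMSE: Squares each error, so large errors are penalized heavily; RMSE is expressed in the target's units

- MAE: Averages absolute errors, so it is less sensitive to outliers than MSE

- R-squared: Proportion of the target's variance explained by the model (1 is a perfect fit; it can be negative for a model worse than predicting the mean)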
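A small sketch of these regression metrics, assuming plain Python sequences of equal length (`regression_metrics` is a hypothetical helper; `sklearn.metrics` offers `mean_squared_error`, `mean_absolute_error` and `r2_score`). The example compares two hypothetical prediction sets with the same MAE: one spreads its error evenly, the other concentrates it in a single large miss.

```python
import math


def regression_metrics(y_true, y_pred):
    """MSE, RMSE, MAE and R-squared for equal-length sequences of numbers."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)              # residual sum of squares
    mean_y = sum(y_true) / n
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)  # total sum of squares around the mean
    if ss_tot == 0:
        raise ValueError("R-squared is undefined when y_true is constant")
    mse = ss_res / n
    return {
        "mse": mse,
        "rmse": math.sqrt(mse),                      # same units as the target
        "mae": sum(abs(e) for e in errors) / n,
        "r2": 1 - ss_res / ss_tot,                   # negative if worse than predicting the mean
    }


if __name__ == "__main__":
    y_true = [1, 2, 3, 4]
    even_errors = [1.5, 2.5, 3.5, 4.5]   # every prediction off by 0.5
    one_big_miss = [1, 2, 3, 6]          # a single prediction off by 2
    print(regression_metrics(y_true, even_errors))
    print(regression_metrics(y_true, one_big_miss))
```

Both prediction sets have an MAE of 0.5, but RMSE doubles from 0.5 to 1.0 and R-squared falls from 0.8 to about 0.2 for the single large miss, which is the "penalizes large errors" behavior of MSE/RMSE.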

When to Use

How thorough validation needs to be depends on:

- Model complexity and risk

- Data size and variability

- Deployment requirements

- Regulatory constraints

Common Pitfalls

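- Using accuracy on imbalanced data, where a model that always predicts the majority class can score high while missing every positive

- Data leakage during preprocessing, e.g. fitting a scaler or feature selector on the full dataset before splitting

- Optimizing a metric that does not reflect the business objective

- Not holding out a final test set

- Ignoring variance across folds and reporting only the mean score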
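Two of these pitfalls are easy to demonstrate. The sketch below first shows a trivial "always negative" classifier reaching 95% accuracy with zero recall on data that is 5% positive. It then runs leakage-free cross-validation: the standardizer is re-fitted on each training fold only, and the function reports both the mean and the standard deviation of the per-fold MAE. The one-feature least-squares model, the interleaved `i % k` fold assignment and the helper names are illustrative assumptions, not a prescribed pipeline; with scikit-learn, wrapping the scaler and model in a `Pipeline` achieves the same per-fold fitting.

```python
import statistics


def majority_class_demo():
    """Pitfall: accuracy on imbalanced data. Returns (accuracy, recall)."""
    y_true = [1] * 5 + [0] * 95   # 5% positive class
    y_pred = [0] * 100            # trivial model that always predicts "negative"
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    recall = sum(t == p == 1 for t, p in zip(y_true, y_pred)) / sum(y_true)
    return accuracy, recall


def fit_standardizer(values):
    """Learn mean/std from the given values only and return a scaling function."""
    mu = statistics.fmean(values)
    sigma = statistics.pstdev(values) or 1.0  # guard against constant features
    return lambda v: (v - mu) / sigma


def cross_validate(xs, ys, k):
    """Leakage-free k-fold CV for a hypothetical one-feature linear model.

    Returns (mean, standard deviation) of the per-fold mean absolute error.
    Assumes k >= 2 and at least two distinct x values in every training fold.
    """
    n = len(xs)
    fold_maes = []
    for f in range(k):
        test = [i for i in range(n) if i % k == f]   # interleaved folds, for brevity
        train = [i for i in range(n) if i % k != f]
        scale = fit_standardizer([xs[i] for i in train])  # fitted on training rows only
        x_tr = [scale(xs[i]) for i in train]
        y_tr = [ys[i] for i in train]
        # Ordinary least squares for y = a + b * x on the scaled feature.
        x_bar, y_bar = statistics.fmean(x_tr), statistics.fmean(y_tr)
        b = sum((x - x_bar) * (y - y_bar) for x, y in zip(x_tr, y_tr)) / sum(
            (x - x_bar) ** 2 for x in x_tr
        )
        a = y_bar - b * x_bar
        fold_maes.append(statistics.fmean([abs(ys[i] - (a + b * scale(xs[i]))) for i in test]))
    return statistics.fmean(fold_maes), statistics.stdev(fold_maes)


if __name__ == "__main__":
    print(majority_class_demo())
    xs = list(range(20))
    ys = [2 * x + (1 if x % 3 == 0 else -1) for x in xs]
    mean_mae, std_mae = cross_validate(xs, ys, k=4)
    print(f"MAE {mean_mae:.3f} +/- {std_mae:.3f} across folds")
```

Reporting the spread alongside the mean makes it visible when a model's score depends heavily on which fold it happened to be tested on.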

(c) Joerg Osterrieder 2025