Responsible AI

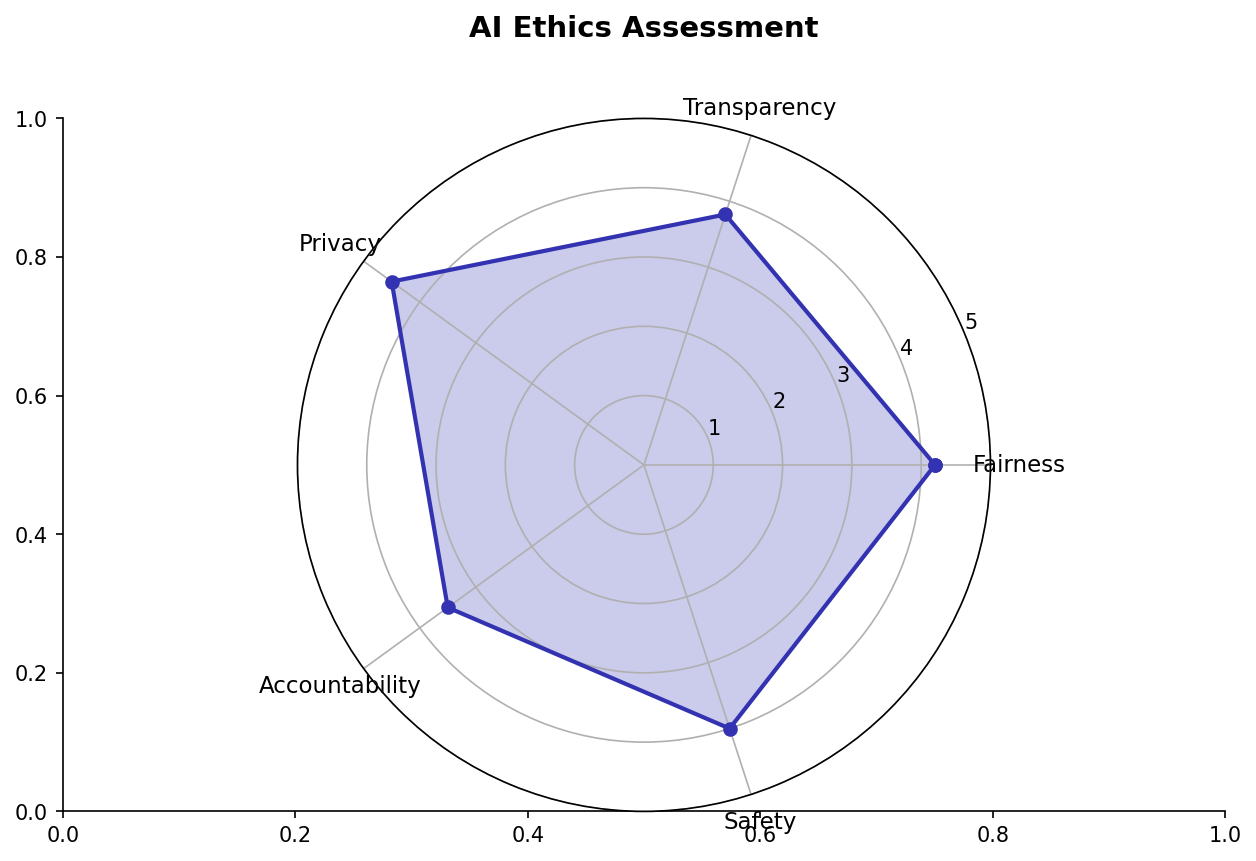

Building AI systems that are fair, transparent, and accountable.

Learning Outcomes

By completing this topic, you will:

- Identify sources of bias in ML systems

- Apply fairness metrics and mitigation strategies

- Use SHAP for model explanations

- Design for accountability and transparency

Prerequisites

- Classification and Supervised Learning

- Understanding of model evaluation

- Basic ethics concepts

Key Concepts

Bias in ML Systems

Sources of unfairness:

- Data bias: Training data that does not represent the population the model will serve

- Algorithmic bias: The model amplifies patterns already present in the data

- Measurement bias: Labels or proxies that capture the intended outcome poorly

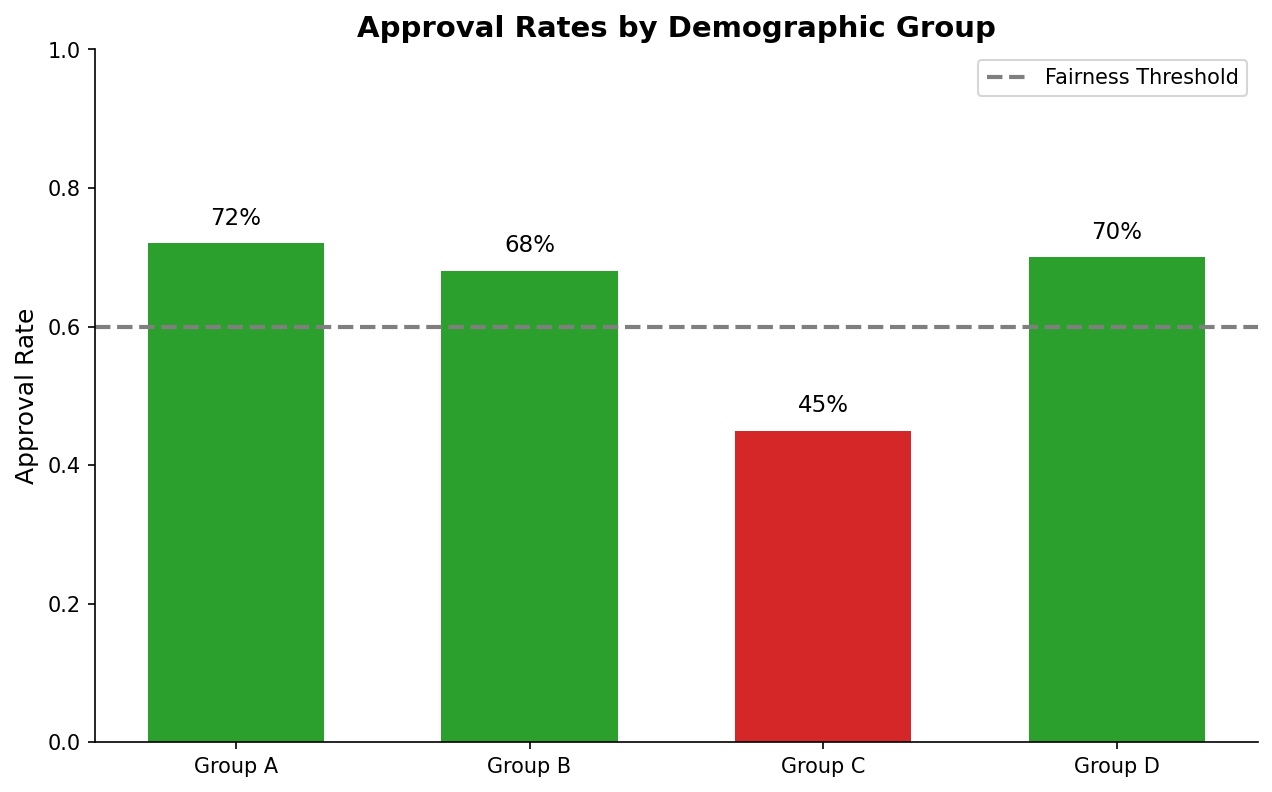
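A first check for data bias is to compare how often each group appears in the training data with how often it appears in the target population. The sketch below is a minimal illustration under stated assumptions: the group labels, the sample, and the reference shares are all hypothetical, and `representation_gap` is a helper written for this example, not a library function.

```python
from collections import Counter

def representation_gap(groups, reference_shares):
    """Return observed share minus reference share for each group."""
    counts = Counter(groups)
    total = len(groups)
    return {
        g: counts.get(g, 0) / total - ref
        for g, ref in reference_shares.items()
    }

# Hypothetical training sample: one group label per row.
train_groups = ["A"] * 80 + ["B"] * 20

# Hypothetical reference shares for the target population (assumed values).
reference = {"A": 0.5, "B": 0.5}

gaps = representation_gap(train_groups, reference)
for group, gap in sorted(gaps.items()):
    # A positive gap means the group is over-represented in the training data.
    print(f"{group}: {gap:+.2f}")
```

Here group A is over-represented by 30 percentage points and group B is under-represented by the same amount. A gap like this does not prove the model will be unfair, but it flags where performance should be checked per group.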

Fairness Metrics

- Demographic parity: Equal rates of positive predictions across groups

- Equalized odds: Equal true positive rate (TPR) and false positive rate (FPR) across groups

- Individual fairness: Similar individuals receive similar predictions

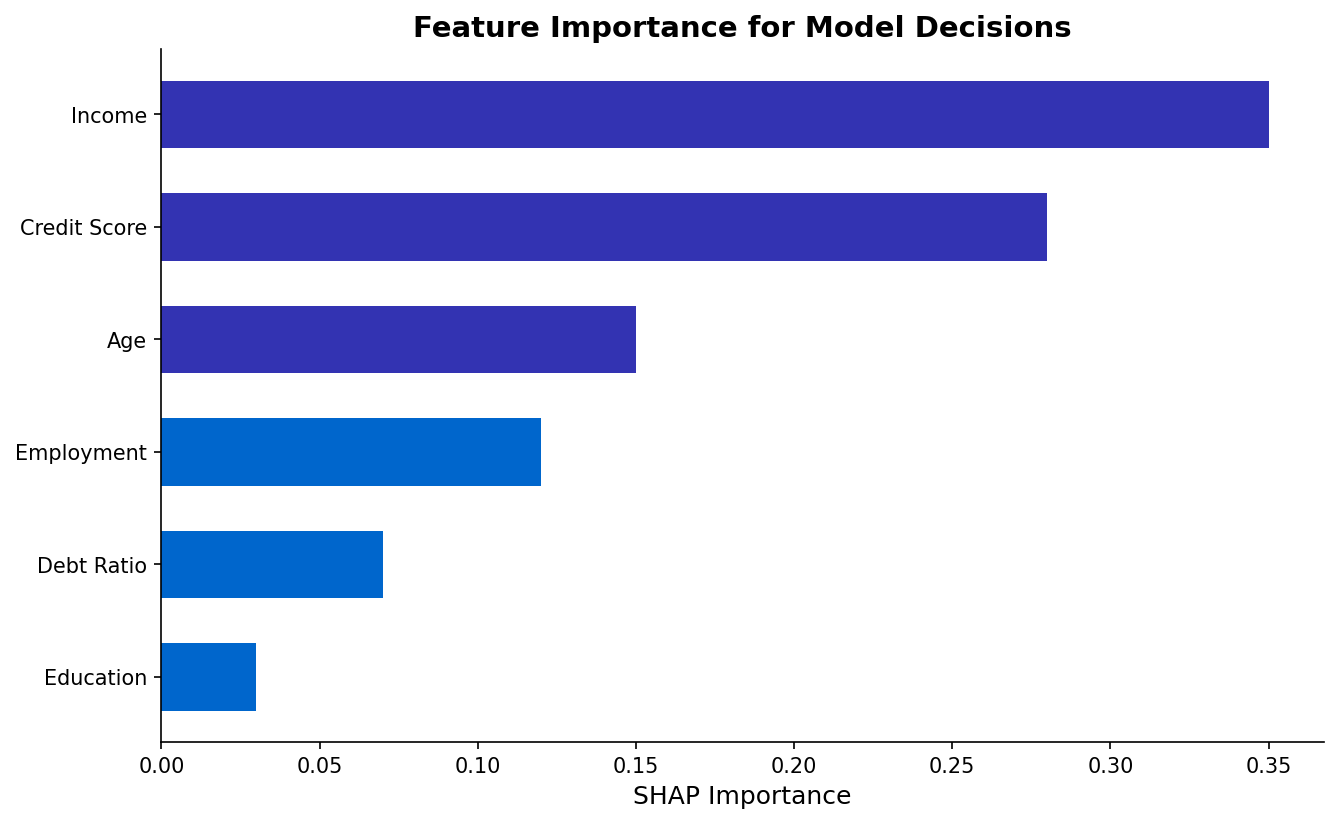
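The two group metrics above can be computed directly from labels, predictions, and group membership. The following is a minimal sketch, assuming binary labels and predictions (0 or 1) and at least one positive and one negative example per group. The data and the helper names `rates_by_group` and `max_gap` are hypothetical and chosen for illustration.

```python
def rates_by_group(y_true, y_pred, groups):
    """Compute selection rate, TPR, and FPR for each group."""
    stats = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        pos = [i for i in idx if y_true[i] == 1]
        neg = [i for i in idx if y_true[i] == 0]
        # Assumes each group has at least one positive and one negative label.
        stats[g] = {
            "selection_rate": sum(y_pred[i] for i in idx) / len(idx),
            "tpr": sum(y_pred[i] for i in pos) / len(pos),
            "fpr": sum(y_pred[i] for i in neg) / len(neg),
        }
    return stats

def max_gap(stats, key):
    """Largest difference in a metric between any two groups."""
    values = [s[key] for s in stats.values()]
    return max(values) - min(values)

# Hypothetical toy data: four people in group A, four in group B.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A"] * 4 + ["B"] * 4

stats = rates_by_group(y_true, y_pred, groups)

# Demographic parity compares selection rates.
dp_gap = max_gap(stats, "selection_rate")
# Equalized odds requires both TPR and FPR to match; report the worse gap.
eo_gap = max(max_gap(stats, "tpr"), max_gap(stats, "fpr"))

print(f"demographic parity gap: {dp_gap:.2f}")
print(f"equalized odds gap:     {eo_gap:.2f}")
```

A gap of 0 means the metric is satisfied exactly. In practice a tolerance is chosen, and the choice of tolerance is itself a design decision that should be documented.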

Explainability with SHAP

- Feature importance at the individual (per-prediction) and global (whole-model) levels

- Additive explanations based on Shapley values from cooperative game theory

- Visualization of each feature's contribution to a prediction
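To make the game-theoretic idea concrete, the sketch below computes exact Shapley values by brute force for a tiny model. It does not use the SHAP library, which estimates these values with more efficient algorithms. The assumptions here are that "missing" features are replaced by a fixed baseline value (one of several possible conventions) and that `model`, `x`, and `baseline` are hypothetical examples.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley values for one instance; cost grows as 2^n in features."""
    n = len(x)

    def value(subset):
        # Features in `subset` keep the instance's values; the rest use the baseline.
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return predict(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(n):
            # Shapley weight for coalitions of size k that exclude feature i.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for s in combinations(others, k):
                coalition = set(s)
                # Marginal contribution of feature i when added to this coalition.
                phi[i] += weight * (value(coalition | {i}) - value(coalition))
    return phi

def model(z):
    # Hypothetical scoring function: linear terms plus one interaction.
    return 2.0 * z[0] + 1.0 * z[1] + 0.5 * z[0] * z[2]

x = [1.0, 2.0, 4.0]          # instance to explain
baseline = [0.0, 0.0, 0.0]   # assumed reference input

phi = shapley_values(model, x, baseline)
print([round(p, 6) for p in phi])

# Additivity: contributions sum to prediction minus baseline prediction.
print(round(sum(phi), 6), model(x) - model(baseline))
```

The contributions come out to roughly 3, 2, and 1, which sum to the difference between the prediction (6) and the baseline prediction (0). The interaction term is split equally between the two features involved, which is the additive property the bullets above refer to.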

When to Use

Responsible AI practices are essential when:

- Decisions affect people’s lives

- Protected attributes are involved

- Regulatory compliance is required

- Building trust is important

Common Pitfalls

- Treating fairness as a one-time check

- Optimizing for a single fairness metric

- Ignoring intersectionality

- Conflating correlation with discrimination

- Not involving stakeholders in design
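The intersectionality pitfall can be shown with a small constructed example: selection rates look equal for each attribute on its own, yet differ sharply once the attributes are combined. This is a sketch on hypothetical data, with attribute names (`sex`, `age`) and the helper `selection_rates` chosen for illustration only.

```python
from collections import defaultdict

def selection_rates(records, keys):
    """Positive-prediction rate for each combination of the given attributes."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for r in records:
        group = tuple(r[k] for k in keys)
        totals[group] += 1
        positives[group] += r["pred"]
    return {g: positives[g] / totals[g] for g in totals}

def make_records(sex, age, n_pos, n_neg):
    """Build hypothetical rows with n_pos positive and n_neg negative predictions."""
    rows = [{"sex": sex, "age": age, "pred": 1} for _ in range(n_pos)]
    rows += [{"sex": sex, "age": age, "pred": 0} for _ in range(n_neg)]
    return rows

# Hypothetical predictions, constructed so that marginal rates are balanced.
records = (
    make_records("F", "young", 3, 1)
    + make_records("F", "old", 1, 3)
    + make_records("M", "young", 1, 3)
    + make_records("M", "old", 3, 1)
)

by_sex = selection_rates(records, ["sex"])
by_age = selection_rates(records, ["age"])
by_both = selection_rates(records, ["sex", "age"])

print("by sex: ", by_sex)
print("by age: ", by_age)
print("by both:", by_both)
```

Each single-attribute view reports a selection rate of 0.5 for every group, while the combined view ranges from 0.25 to 0.75. An audit that checks attributes only one at a time would miss this disparity.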

(c) Joerg Osterrieder 2025