Neural Networks

Neural Networks

Deep learning architectures for complex pattern recognition.

Learning Outcomes

By completing this topic, you will:

- Explain how neurons and layers process information

- Describe forward and backward propagation

- Choose appropriate activation functions

- Understand when depth helps (and when it doesn’t)

Visual Guides

Prerequisites

- Supervised Learning concepts

- Basic calculus (derivatives, chain rule)

- Matrix operations

Key Concepts

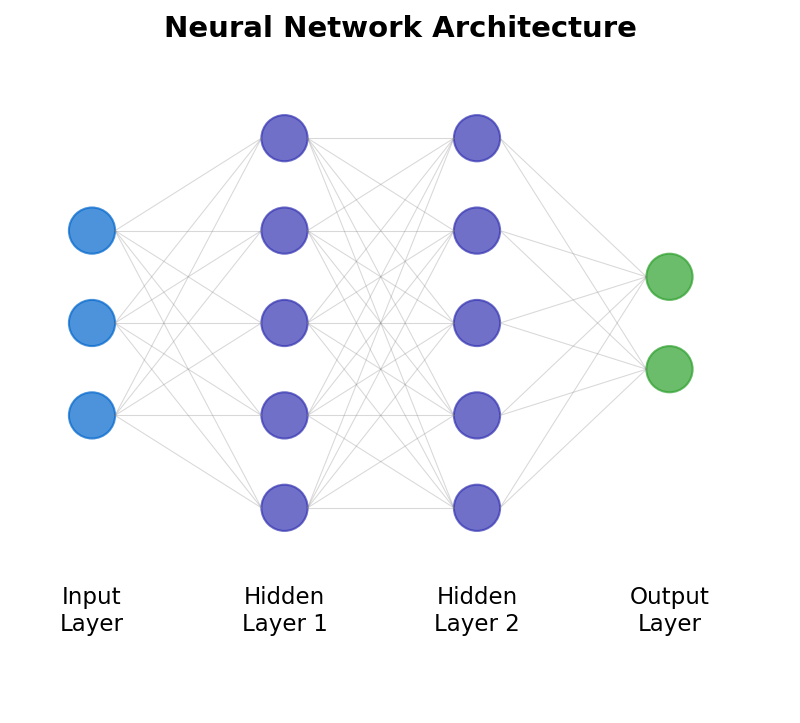

Network Architecture

- Input layer: Receives features

- Hidden layers: Learn representations

- Output layer: Produces predictions

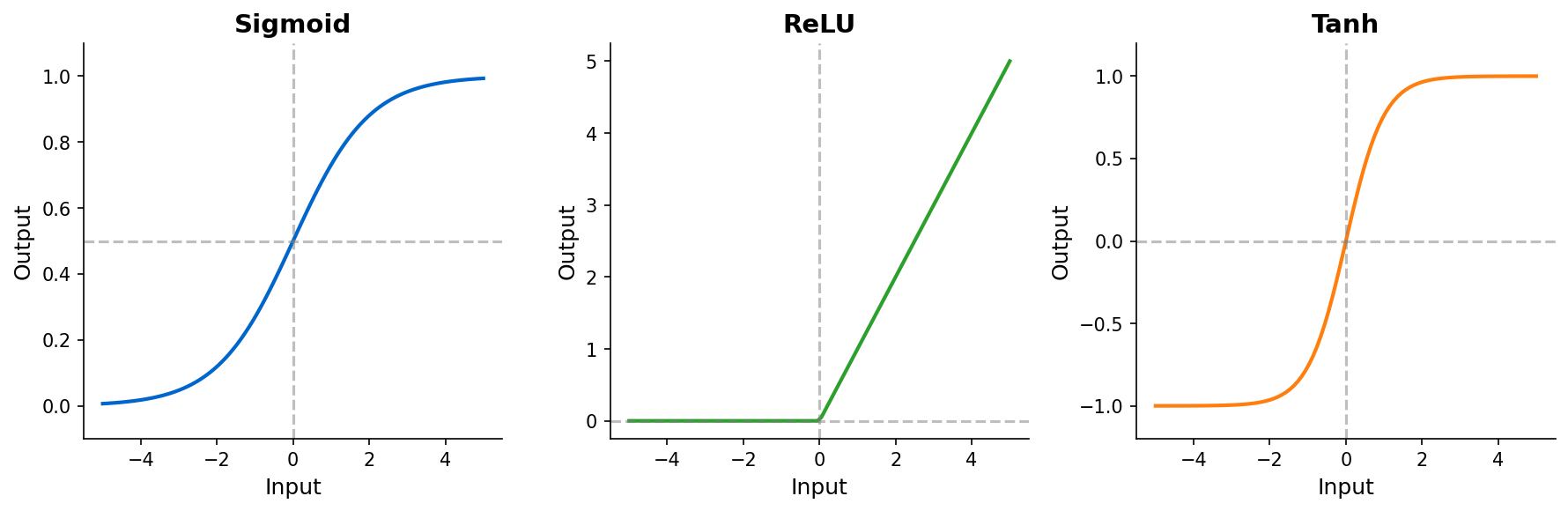

Activation Functions

- ReLU: Default for hidden layers (fast, avoids vanishing gradients)

- Sigmoid: Binary classification output

- Softmax: Multi-class classification output

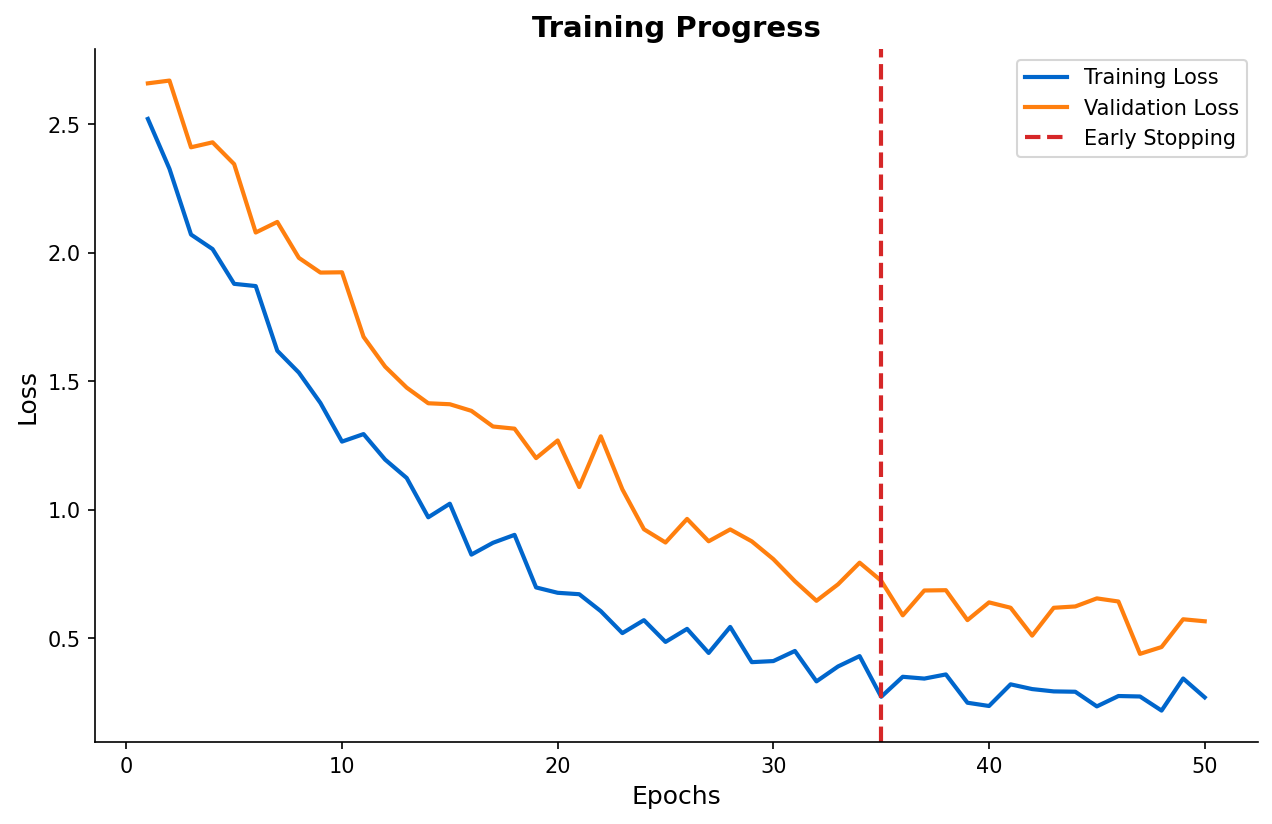

Training Process

- Forward pass: Compute predictions

- Loss calculation: Measure error

- Backward pass: Compute gradients

- Update weights: Gradient descent

Common Architectures

- Feedforward: Basic fully-connected networks

- CNN: Image and spatial data

- RNN/LSTM: Sequential and time series data

When to Use

Neural networks excel when:

- You have large amounts of data

- Features require complex transformations

- Interpretability is less critical

- Computational resources are available

Common Pitfalls

- Too few training examples for network size

- Not normalizing inputs

- Learning rate too high or too low

- Vanishing/exploding gradients in deep networks

- Overfitting without regularization (dropout, weight decay)

(c) Joerg Osterrieder 2025