Generative AI

Creating new content with large language models and AI systems.

Learning Outcomes

By completing this topic, you will:

- Understand transformer architecture fundamentals

- Apply effective prompt engineering techniques

- Integrate LLMs into applications

- Evaluate and improve generated outputs

Visual Guides

Prerequisites

- Neural network concepts

- Basic understanding of attention mechanisms

- API usage experience

Key Concepts

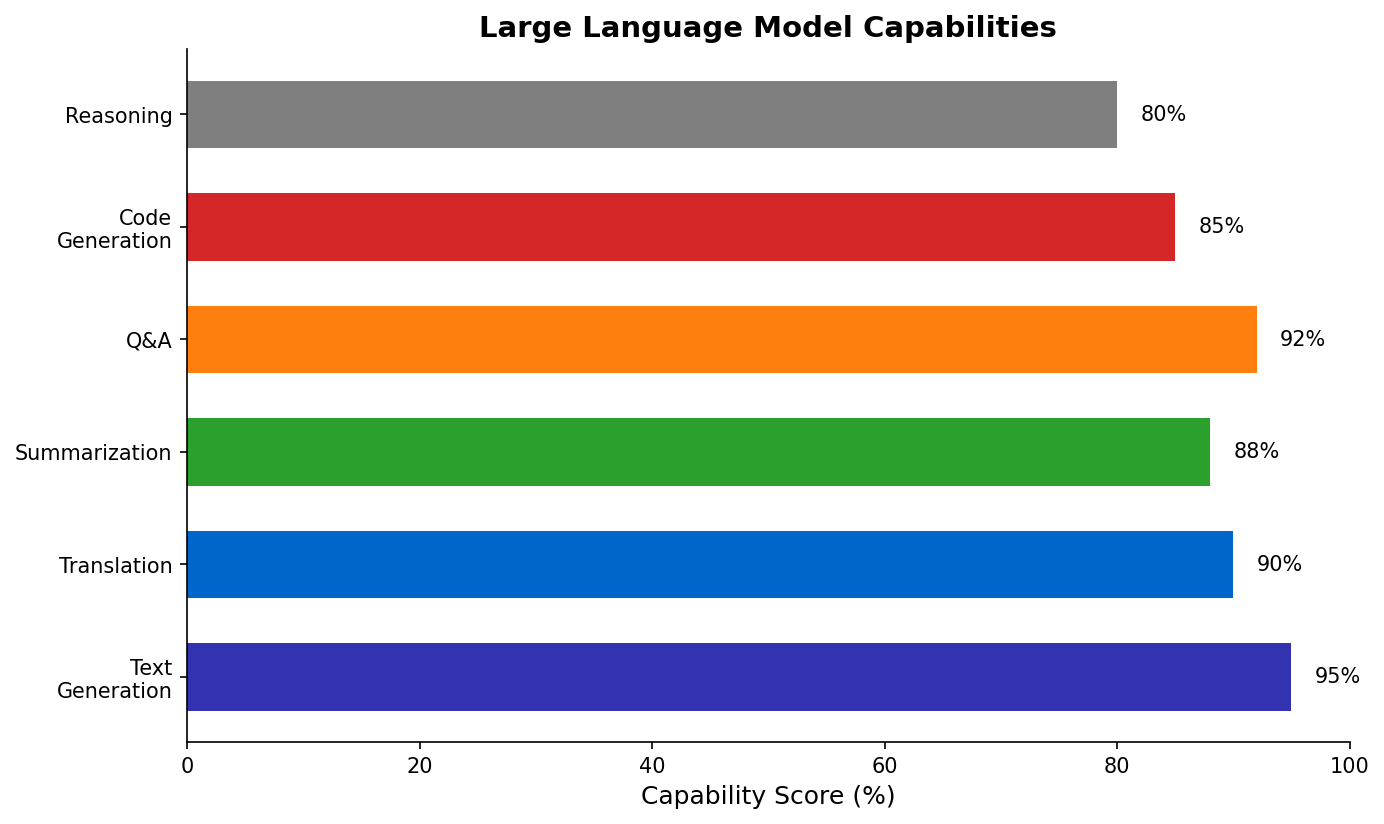

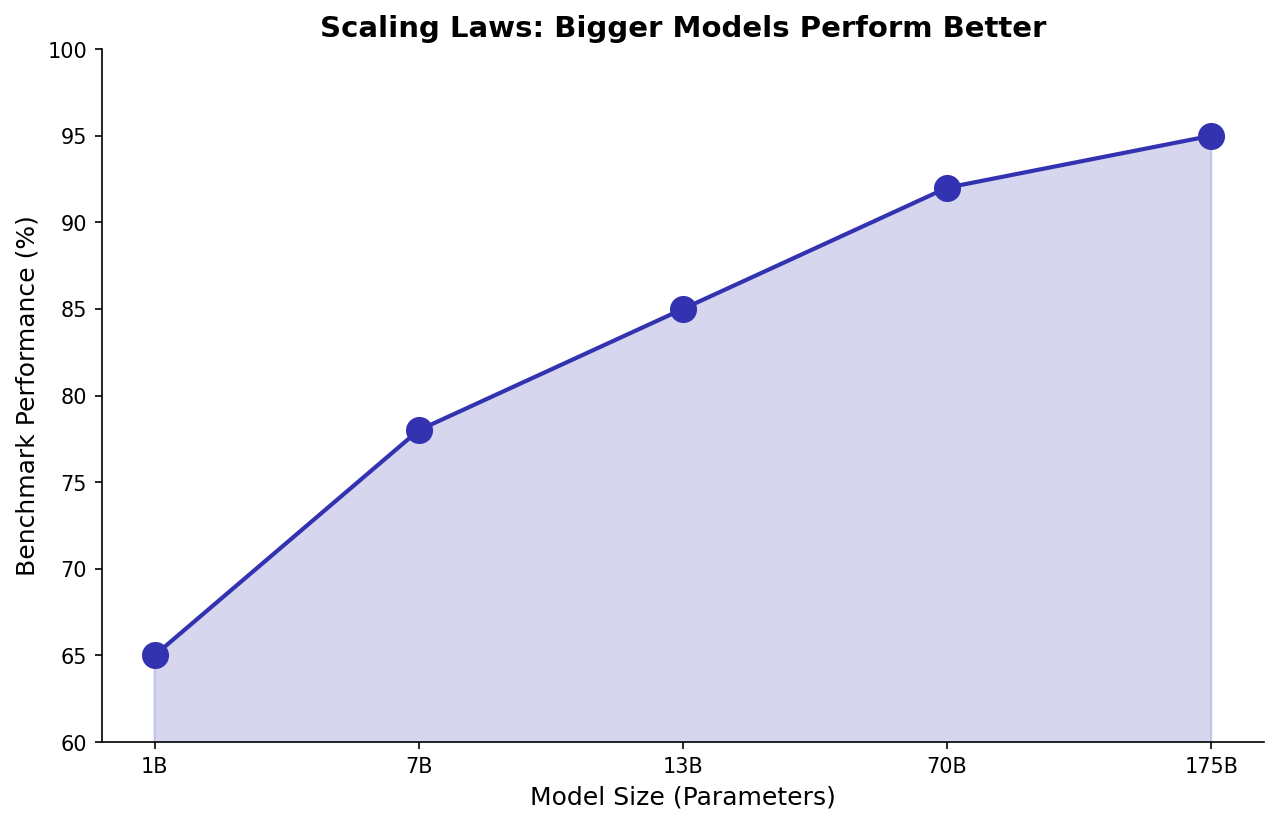

Large Language Models

- Transformers: Attention-based architecture

- Pre-training: Learning from massive text corpora

- Fine-tuning: Adapting to specific tasks
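To make "attention-based" concrete, here is a minimal sketch of scaled dot-product attention, the core operation inside a transformer layer. It uses plain Python lists and made-up toy vectors. The function names `softmax` and `attention` are illustrative, and real models add learned projections, multiple heads, and masking that are left out here.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    dim = len(values[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # The output is the weighted average of the value vectors.
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]
        )
    return outputs


# Toy input: 2 tokens with 2-dimensional vectors (made-up numbers).
tokens = [[1.0, 0.0], [0.0, 1.0]]
result = attention(tokens, tokens, tokens)
```

Each output row mixes all value vectors, with more weight on the tokens whose keys best match the query. In this toy case each token attends most strongly to itself.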

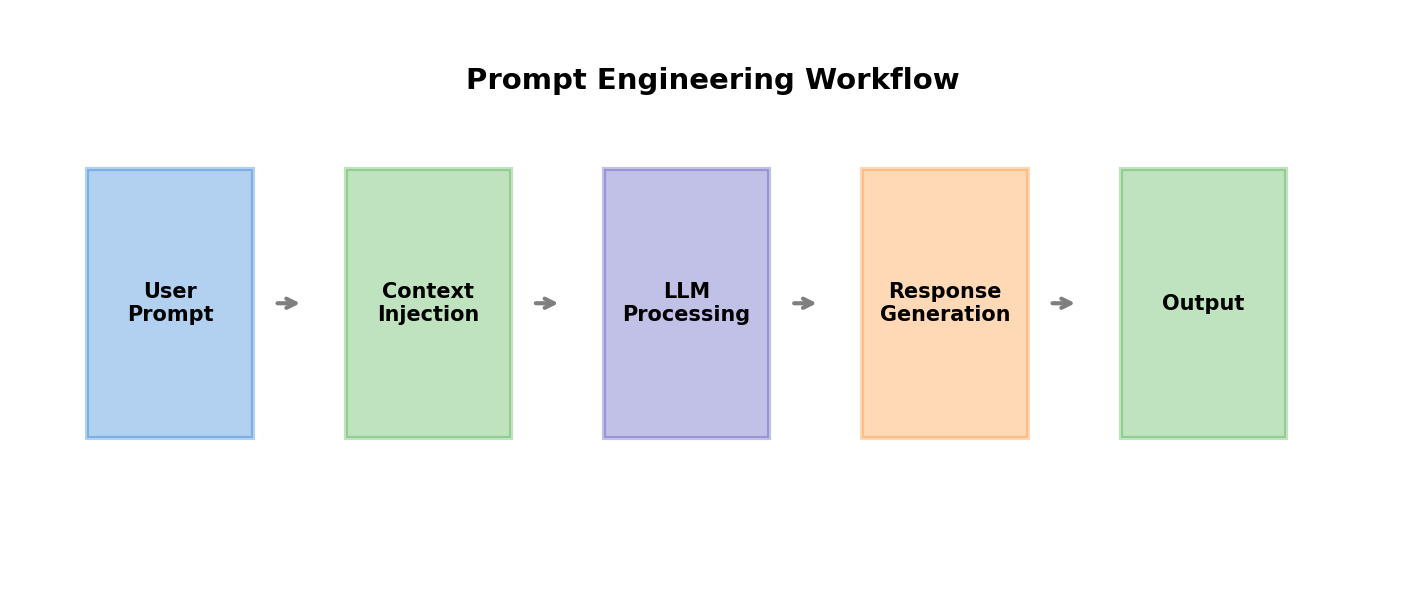

Prompt Engineering

Techniques for better outputs:

- Zero-shot: Direct instructions

- Few-shot: Include examples

- Chain-of-thought: Step-by-step reasoning

- System prompts: Set behavior and constraints
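The four techniques above differ mainly in how the prompt text is assembled. The sketch below builds each variant as plain strings, without calling any model. All function names are hypothetical, and the role/content message list in `with_system` is a shape many chat APIs use but is an assumption here, so check your provider's documentation.

```python
def zero_shot(task, text):
    """Zero-shot: a direct instruction followed by the input."""
    return f"{task}\n\nText: {text}"


def few_shot(task, examples, text):
    """Few-shot: show worked (input, label) pairs before the real input."""
    lines = [task, ""]
    for example_input, example_label in examples:
        lines += [f"Text: {example_input}", f"Label: {example_label}", ""]
    lines += [f"Text: {text}", "Label:"]
    return "\n".join(lines)


def chain_of_thought(question):
    """Chain-of-thought: ask for step-by-step reasoning before the answer."""
    return (
        f"{question}\n\n"
        "Think through the problem step by step, "
        "then give the final answer on its own line."
    )


def with_system(system, user):
    """System prompt: keep behavior and constraints separate from the request."""
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


task = "Classify the sentiment of the text as positive or negative."
examples = [("I loved it.", "positive"), ("Waste of time.", "negative")]
prompt = few_shot(task, examples, "Surprisingly good.")
messages = with_system("Answer with one word only.", prompt)
```

Keeping prompt construction in small functions like these makes it easier to compare variants on the same inputs when evaluating outputs.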

Practical Applications

- Content generation and summarization

- Code assistance and debugging

- Document analysis and extraction

- Creative ideation support

When to Use

Generative AI excels at:

- Tasks requiring language understanding

- Creative content generation

- Rapid prototyping of ideas

- Augmenting human capabilities

Avoid relying on it alone when:

- Exact numerical precision is required

- Every output must be fully verifiable

- Domain expertise is critical
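For the numerical-precision case, one common pattern is to do the arithmetic deterministically in code and treat any number the model reports as a claim to check. The sketch below assumes the model's answer arrives as a string. The helper names `exact_total` and `matches_claim` and the sample amounts are hypothetical.

```python
from decimal import Decimal, InvalidOperation


def exact_total(amounts):
    """Sum decimal strings exactly, avoiding binary floating-point error."""
    return sum((Decimal(a) for a in amounts), Decimal("0"))


def matches_claim(claimed, amounts):
    """Return True only if the model's claimed total equals the exact total."""
    try:
        return Decimal(claimed.strip()) == exact_total(amounts)
    except InvalidOperation:
        # The claim was not a parseable number, so it cannot be trusted.
        return False


amounts = ["0.10", "0.20", "19.99"]  # made-up invoice lines
total = exact_total(amounts)
```

Here the computed total is the source of truth, and `matches_claim` only decides whether the model's text can be shown as-is or needs correcting.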

Common Pitfalls

- Hallucinations (confident but wrong outputs)

- Prompt injection vulnerabilities

- Over-reliance without verification

- Ignoring token limits and costs

- Not testing edge cases
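Token limits and costs can be checked before a request is sent. The following is a rough sketch, not a real tokenizer: it assumes about four characters per token, a common rule of thumb for English text. The context limit and per-1,000-token prices passed in are placeholders that you would replace with your provider's actual figures.

```python
import math

CHARS_PER_TOKEN = 4  # assumption: rough average for English text


def estimate_tokens(text):
    """Approximate the token count from the character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_context(prompt, max_output_tokens, context_limit):
    """Check that the prompt plus the reserved output fits the context window."""
    return estimate_tokens(prompt) + max_output_tokens <= context_limit


def estimate_cost(prompt, max_output_tokens, price_in_per_1k, price_out_per_1k):
    """Upper-bound cost estimate, given prices per 1,000 tokens."""
    input_cost = estimate_tokens(prompt) / 1000 * price_in_per_1k
    output_cost = max_output_tokens / 1000 * price_out_per_1k
    return input_cost + output_cost


prompt = "a" * 400  # stand-in for a real prompt
ok = fits_context(prompt, max_output_tokens=50, context_limit=150)
cost = estimate_cost(prompt, 500, price_in_per_1k=1.0, price_out_per_1k=2.0)
```

For production use, replace `estimate_tokens` with the tokenizer that matches your model, since the true count varies by language and content.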

(c) Joerg Osterrieder 2025