A/B Testing

Statistical experimentation for data-driven decisions.

Learning Outcomes

By completing this topic, you will:

- Design valid A/B experiments

- Calculate required sample sizes

- Analyze results with statistical rigor

- Avoid common experimentation pitfalls

Prerequisites

- Basic statistics (mean, variance)

- Hypothesis testing concepts

- Understanding of p-values and confidence intervals

Key Concepts

Experiment Design

- Define hypothesis and metrics

- Calculate the sample size needed to reach the target statistical power

- Randomize assignment

- Run experiment for planned duration

- Analyze and interpret results

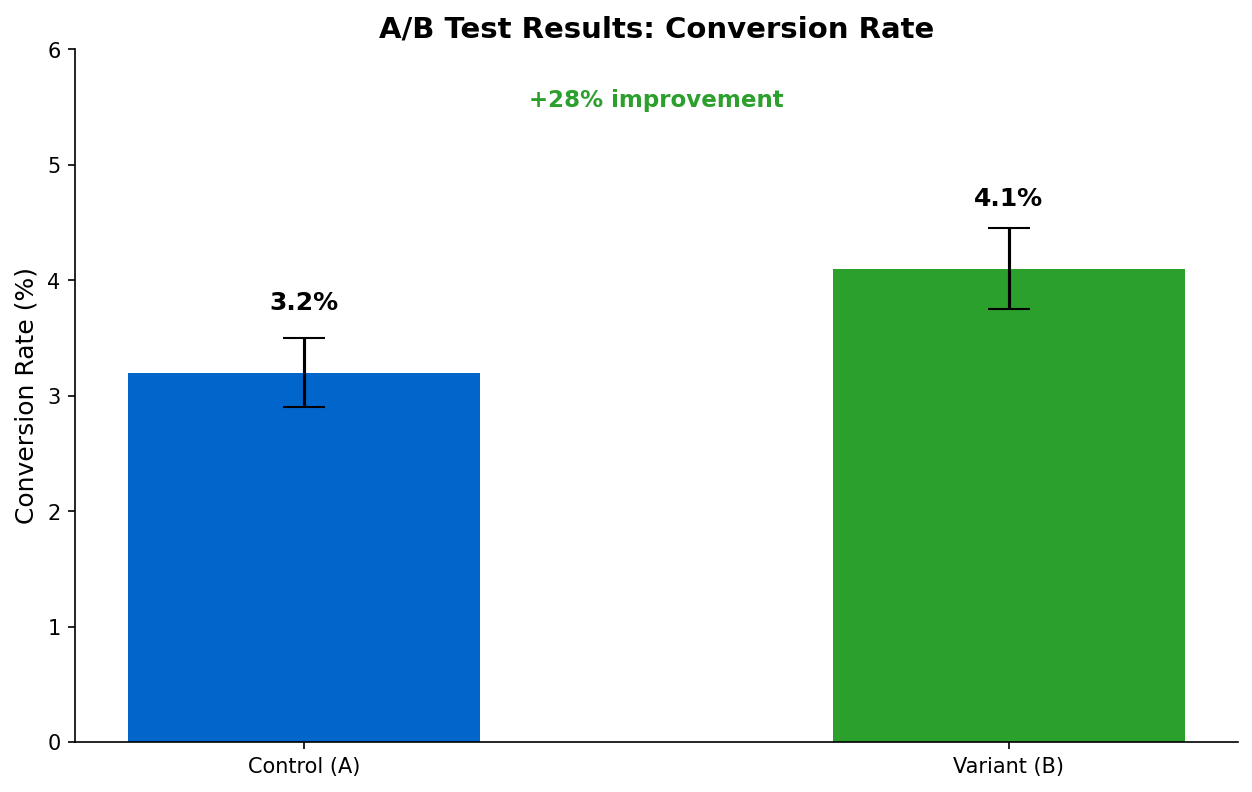
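The "randomize assignment" step can be sketched with deterministic hashing, so that a given user always sees the same variant. This is a minimal illustration, not a prescribed method: the function name `assign_variant`, the experiment key, and the two variant labels are assumptions introduced here.

```python
import hashlib


def assign_variant(user_id: str, experiment: str,
                   variants=("control", "treatment")) -> str:
    """Map a user to a variant deterministically (hypothetical helper).

    Hashing the experiment name together with the user id keeps the
    assignment stable across visits and independent between experiments.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Use the first 8 hex digits (32 bits) as an integer bucket.
    bucket = int(digest[:8], 16) % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    # Illustrative ids only; the split should be close to 50/50.
    users = [f"user-{i}" for i in range(10_000)]
    share = sum(assign_variant(u, "checkout-test") == "treatment"
                for u in users) / len(users)
    print(f"treatment share: {share:.3f}")
```

Hashing on the user id makes the user the randomization unit; a different unit (session, account, region) would only change what is passed in as the key.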

Statistical Analysis

- Null hypothesis: No difference between variants

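- p-value: Probability of observing a result at least as extreme as the one measured, assuming the null hypothesis is true

- Confidence interval: Range of effect sizes consistent with the observed data at the chosen confidence level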

- Effect size: Magnitude of difference

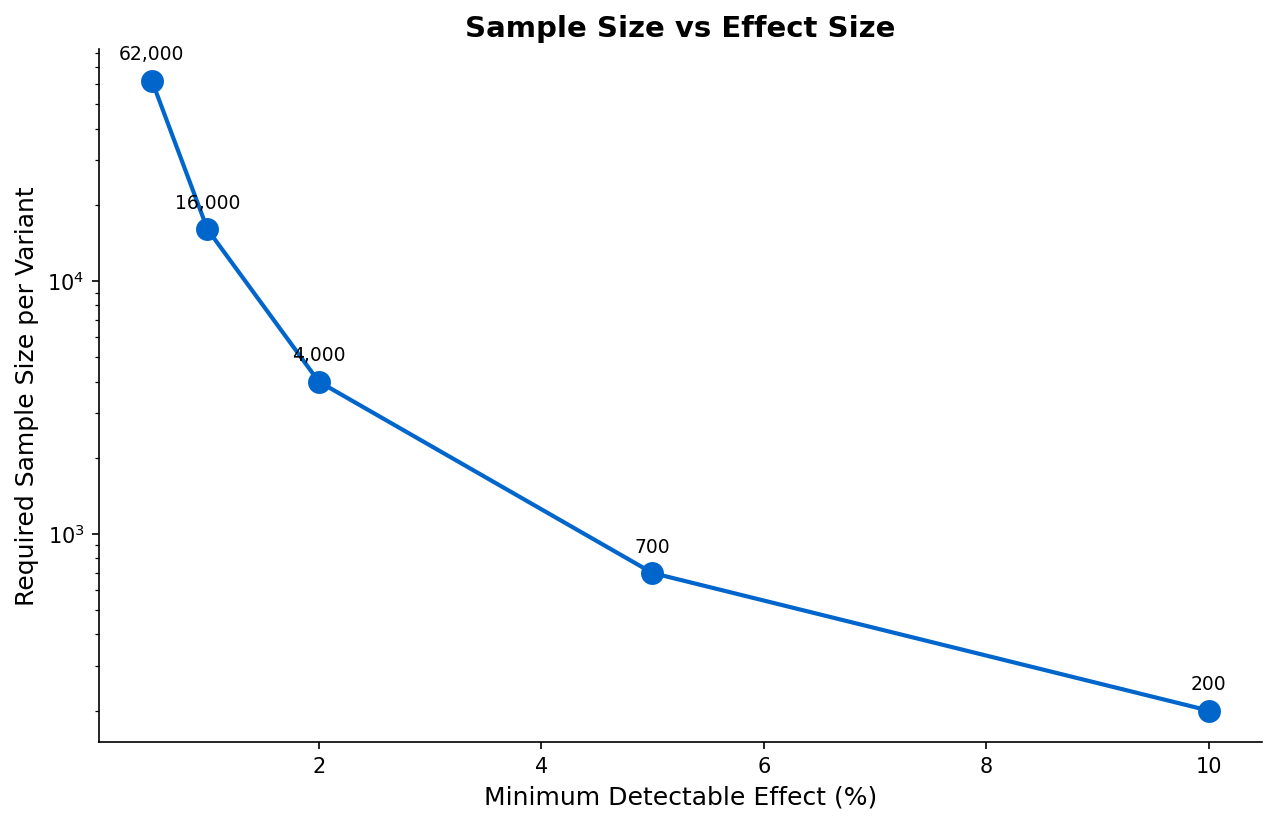
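The quantities above can be computed for a conversion-rate experiment with a two-proportion z-test. The sketch below assumes a binary metric, large samples (normal approximation), and a two-sided test; the function name and the example counts are hypothetical, not taken from a real experiment.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_ztest(conv_a, n_a, conv_b, n_b, alpha=0.05):
    """Two-sided z-test for a difference in conversion rates (sketch)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    diff = p_b - p_a  # effect size as an absolute difference

    # Under the null hypothesis both variants share one rate, so the
    # test statistic uses the pooled standard error.
    # Note: this is zero (division error) if nobody or everybody converts.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se_pool = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = diff / se_pool
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    # The confidence interval uses the unpooled standard error.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    ci = (diff - z_crit * se, diff + z_crit * se)
    return {"diff": diff, "z": z, "p_value": p_value, "ci": ci}


if __name__ == "__main__":
    # Made-up counts: 100/1000 conversions in A, 150/1000 in B.
    print(two_proportion_ztest(100, 1000, 150, 1000))
```

Reporting the interval alongside the p-value shows how large the effect plausibly is, not only whether it differs from zero.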

Sample Size Calculation

The required sample size depends on:

- Minimum detectable effect (MDE)

- Statistical power (typically 80%)

- Significance level (typically 5%)

- Baseline conversion rate
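As a rough illustration of how these four inputs combine, the sketch below uses the standard normal-approximation formula for comparing two proportions, n = (z_{1-α/2} + z_{1-β})² · [p₁(1-p₁) + p₂(1-p₂)] / (p₂ - p₁)². It assumes a two-sided test, equal group sizes, and an MDE expressed as an absolute difference; the function name is hypothetical.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline, mde_abs, alpha=0.05, power=0.80):
    """Approximate users needed per variant (normal approximation).

    baseline: baseline conversion rate, e.g. 0.10
    mde_abs:  minimum detectable effect as an absolute difference
    alpha:    significance level (two-sided)
    power:    desired statistical power (1 - beta)
    """
    p1 = baseline
    p2 = baseline + mde_abs
    if not (0 < p1 < 1 and 0 < p2 < 1) or mde_abs == 0:
        raise ValueError("rates must lie in (0, 1) and the MDE must be non-zero")

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance_sum = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance_sum / mde_abs ** 2
    return ceil(n)


if __name__ == "__main__":
    # Illustrative inputs: 10% baseline, 2 percentage point MDE.
    print(sample_size_per_variant(0.10, 0.02))
```

Because the MDE enters as a squared term in the denominator, halving it roughly quadruples the required sample size.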

When to Use

A/B testing is appropriate when:

- Changes can be randomized fairly

- Traffic is sufficient to reach the required statistical power

- The metric is measurable and relevant to the decision

- There is enough time to run the experiment for its full planned duration

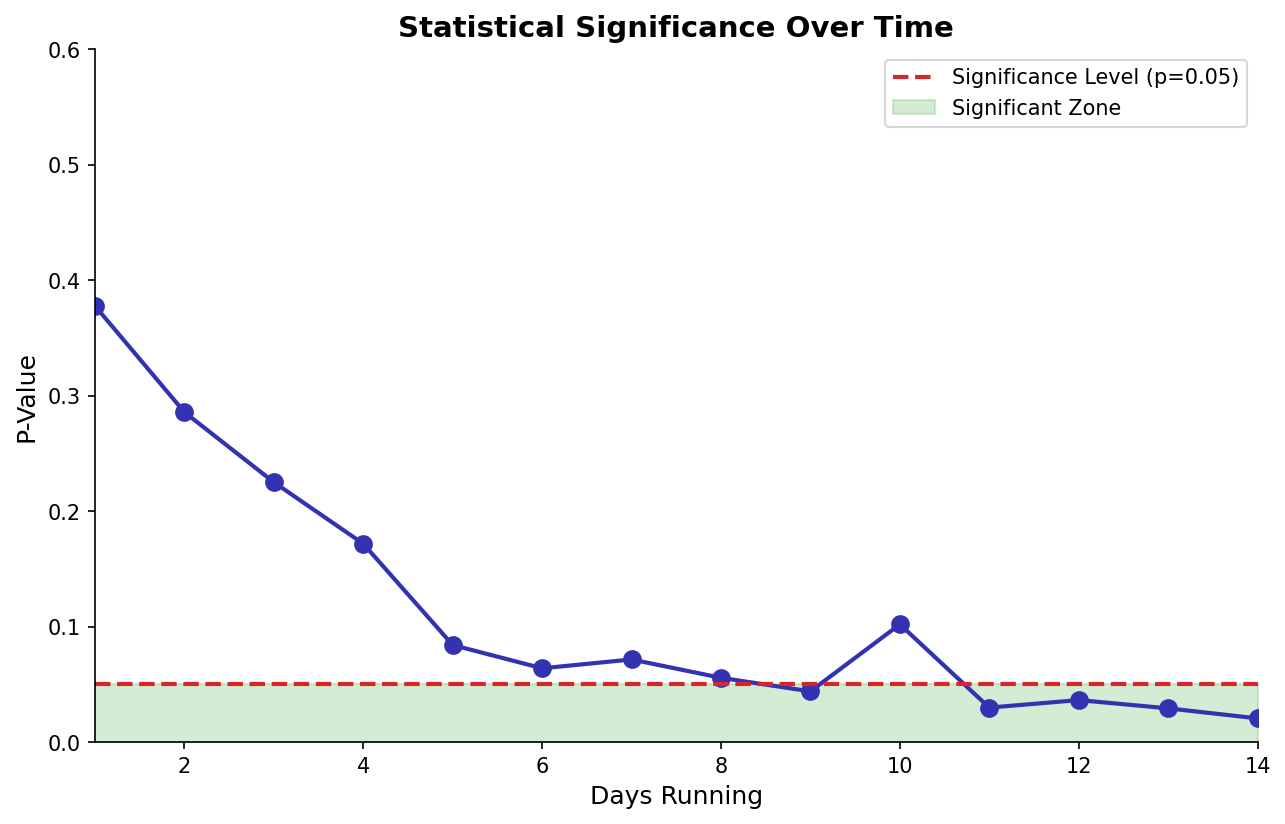

Common Pitfalls

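- Stopping experiments early based on interim results (peeking), which inflates the false positive rate

- Running multiple tests without a multiple-comparison correction

- Ignoring network effects, where one user's treatment influences another user's outcome

- Using sample sizes too small to detect the target effect

- Choosing the wrong randomization unit (for example, sessions when the effect plays out per user)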
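For the multiple-testing pitfall, two common corrections are Bonferroni and Holm. The sketch below is one possible way to apply them to a list of p-values; the function names and the example p-values are made up for illustration.

```python
def bonferroni(p_values, alpha=0.05):
    """Reject a hypothesis only if its p-value is <= alpha / m."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def holm(p_values, alpha=0.05):
    """Holm step-down procedure: less conservative than Bonferroni
    while still controlling the family-wise error rate."""
    m = len(p_values)
    # Visit hypotheses from the smallest to the largest p-value.
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for rank, i in enumerate(order):
        if p_values[i] <= alpha / (m - rank):
            reject[i] = True
        else:
            break  # stop at the first failure; the rest are not rejected
    return reject


if __name__ == "__main__":
    # Hypothetical p-values from four metrics in one experiment.
    p_values = [0.01, 0.02, 0.03, 0.005]
    print("bonferroni:", bonferroni(p_values))
    print("holm:      ", holm(p_values))
```

With these example values Bonferroni rejects two of the four hypotheses while Holm rejects all four, which illustrates why the step-down procedure is often preferred when several metrics are tested together.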

(c) Joerg Osterrieder 2025